What is Lasp?

Lasp is a programming model for building correct distributed applications.

Lasp is about writing correct distributed programs.

The goal of Lasp is to allow you to write a program once and know that it will behave the same whether it is executed on one computer or distributed across many.

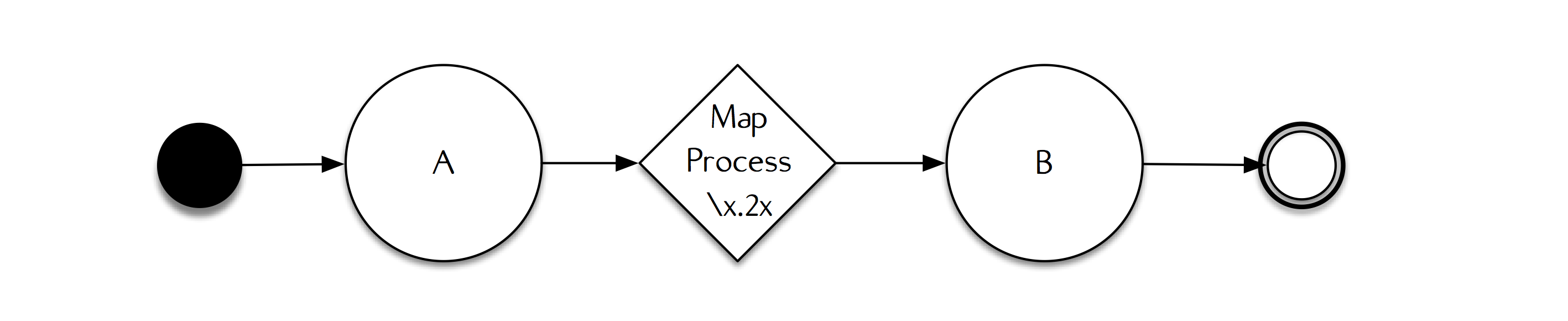

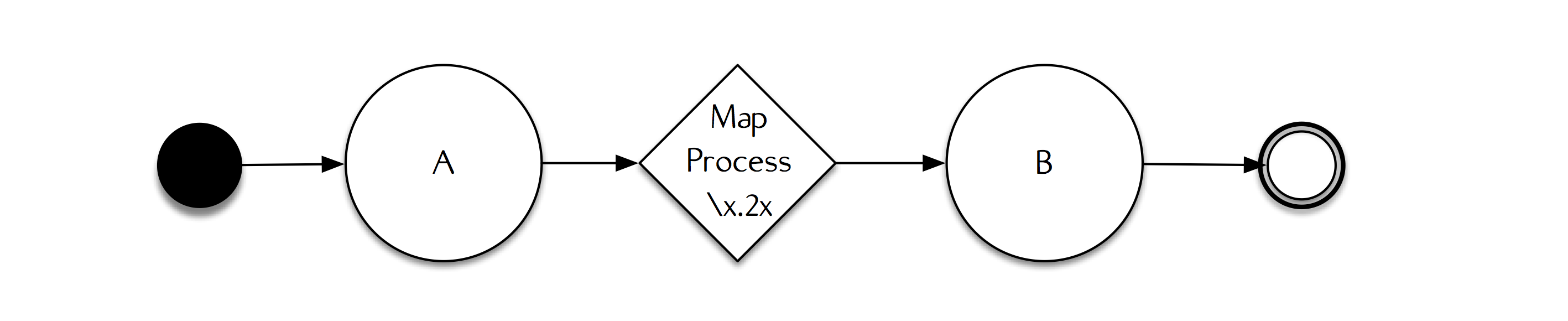

To demonstrate, consider the following dataflow program running on a single node.

In this example, both A and B are sets, and every time A changes we will repopulate B with the new value of the transformation applied to A.

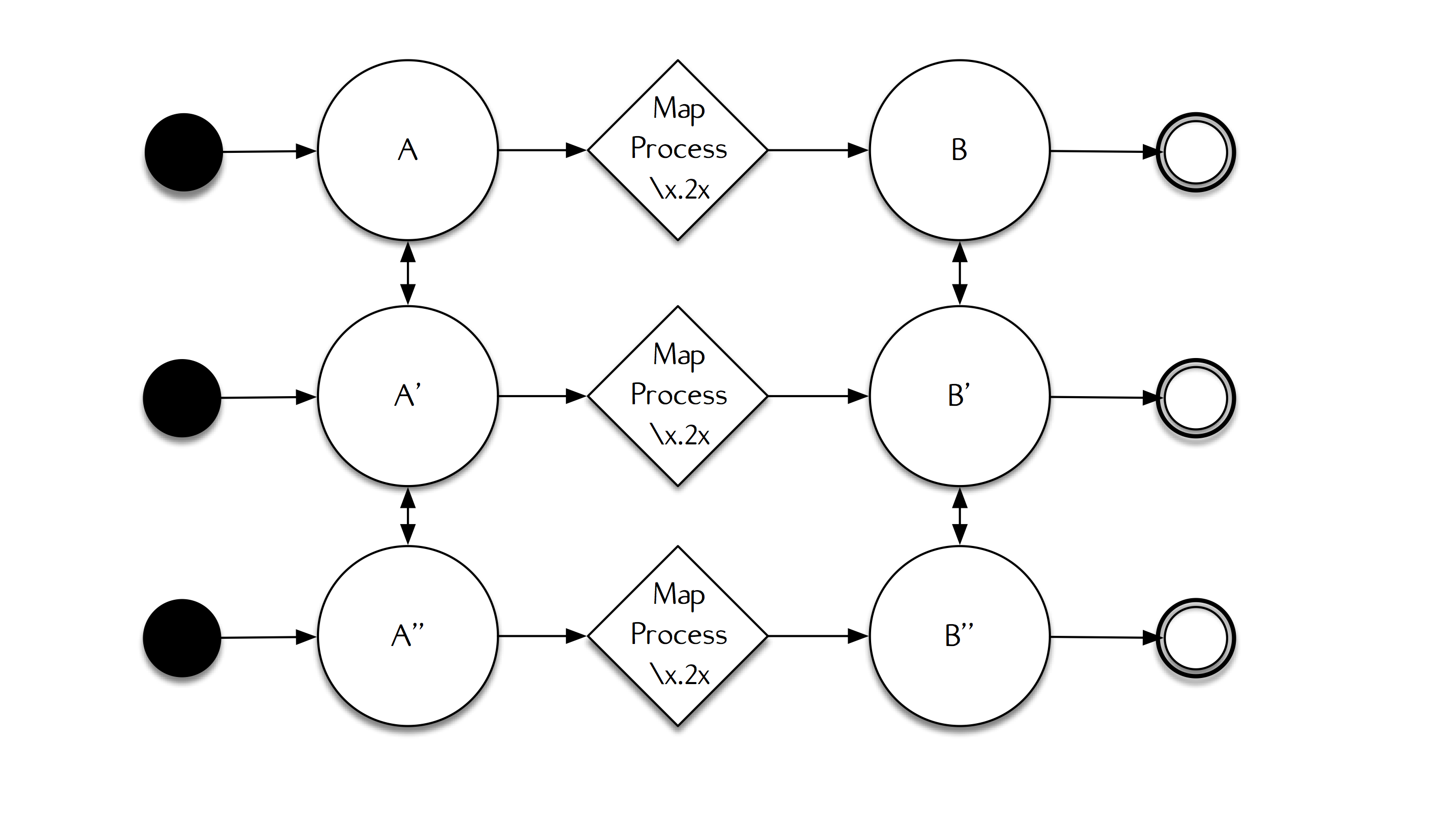

Now, consider if we replicate this computation on every node in the cluster and each of these nodes is going to communicate with other nodes in the cluster to propagate changes to their copies of both A and B.

In this diagram, unidirectional arrows indicate the direction that data can flow, and bidirectional arrows indicate replication between objects, where changes can propagate in either direction, between different replicas running on different nodes. In this example, changes can freely propagate between replicas of A and replicas of B through a background anti-entropy protocol that ensures each replica is kept up-to-date with its peer replicas.

Now, because of the realities of distributed systems, there's multiple schedules that events can take based on network availability and who the nodes choose to randomly propagate changes with each other.

What's a schedule?

When we say schedule, we refer to one possible order all of the messages in a given execution might be delivered in. In distributed systems, there exists an extremely large space of possible schedules, where only some of those schedules may or may not produce the same outcome.

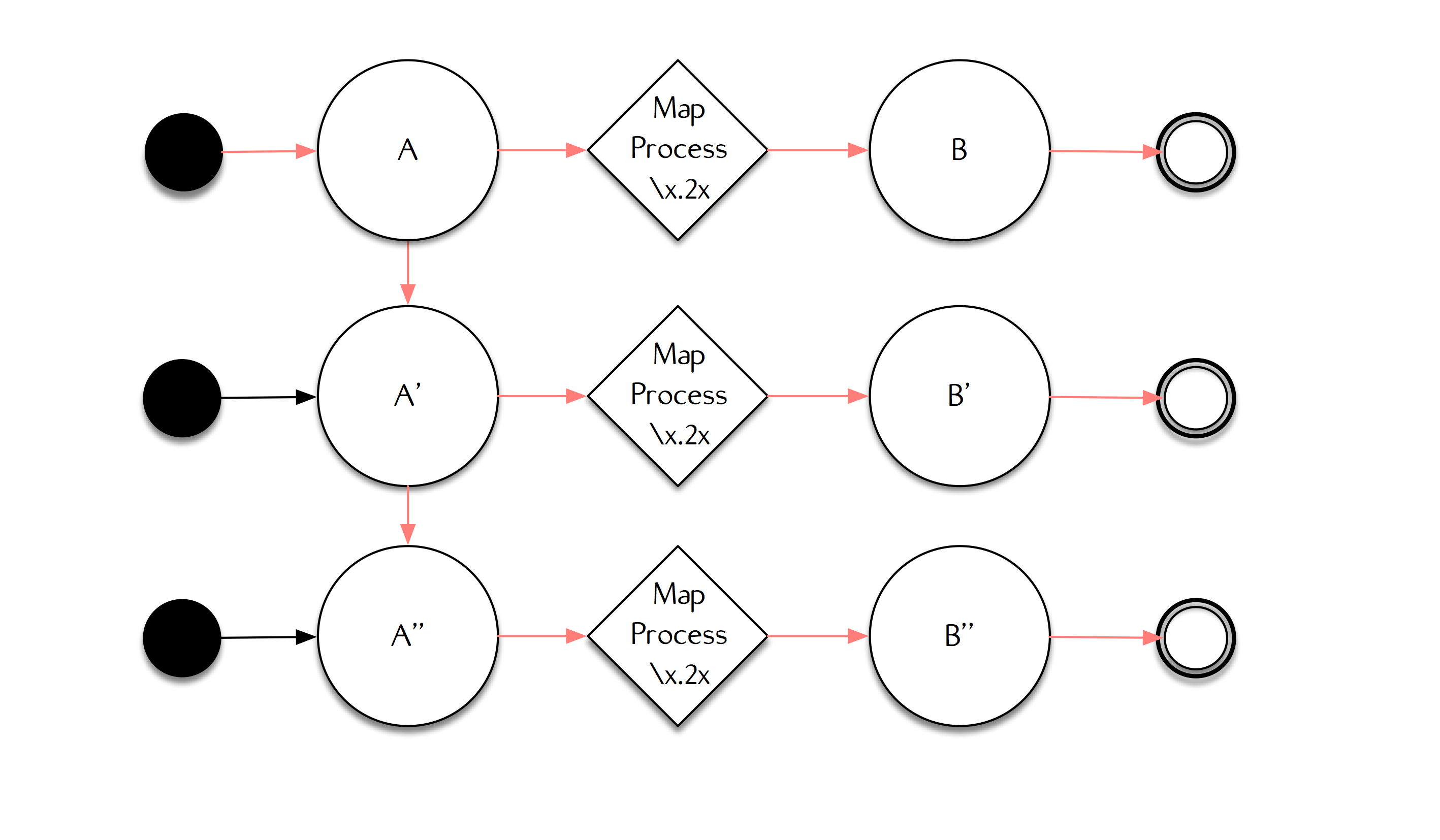

For instance, consider the following schedule.

In this schedule, designated by the red color, each node propagates their value of A to other nodes in the system, then each apply the transformation to produce a new value of B.

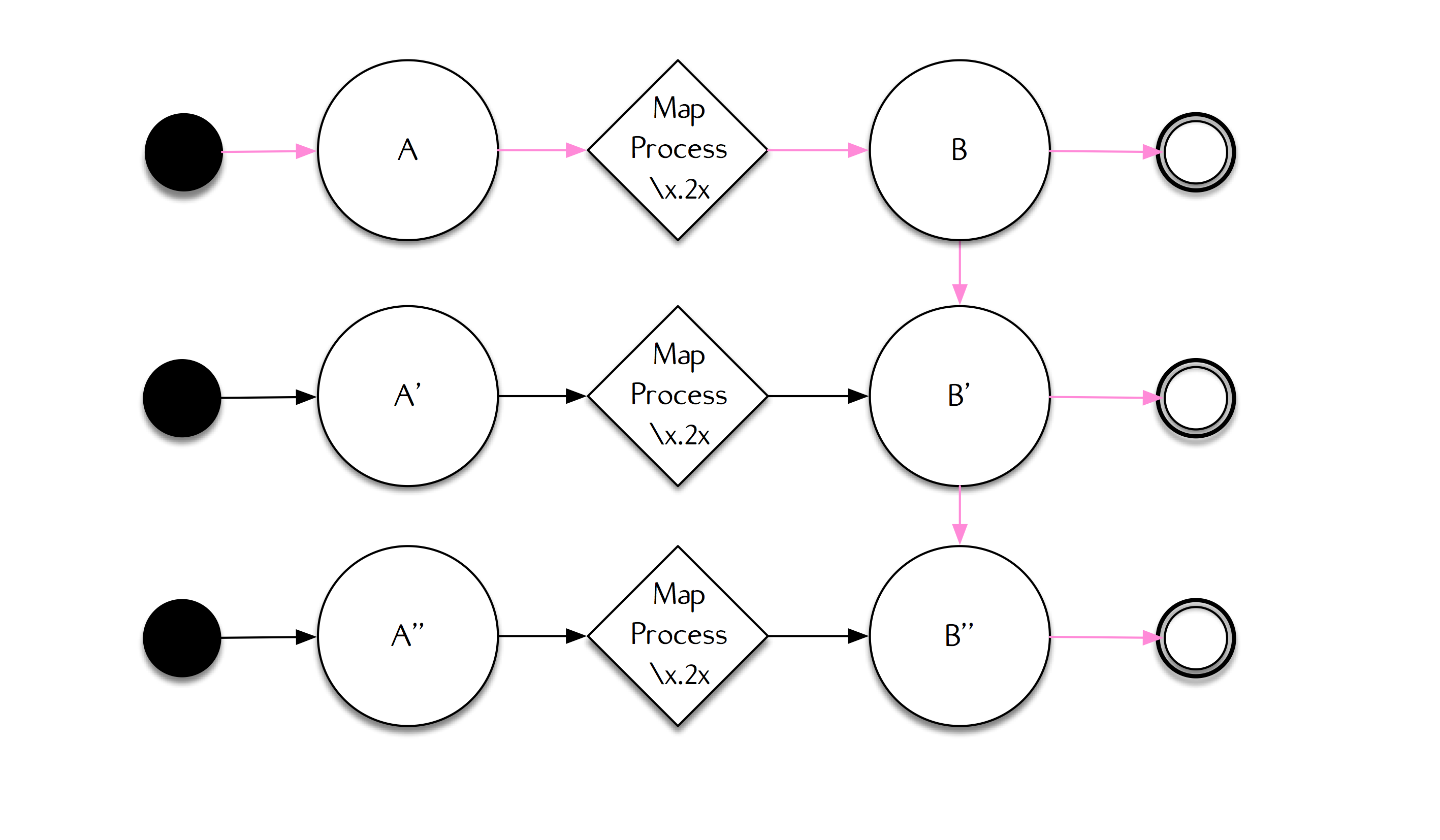

But, let's consider another completely valid schedule.

In this schedule, designated by the purple color, each node propagates their value of B after the first node in the system transforms its A into its value for B.

These different schedules are just a few of the many ways that information may flow through a distributed system, and it can be complicated to reason about all of the ways that a distributed computation might resolve. Lasp removes this complexity completely, and ensures that your programs compute the same results for all schedules.

Regardless of schedule, they are reducible, or equivalent, to the original sequential specification.

Developers no longer need to worry about the order in which messages are delivered or worry about any of the specific details around data replication and distribution. Furthermore, in Lasp, altering the propagation strategy or network topology does not change program behavior.

Lasp is not [yet!] as expressive as common languages like Python or Java, or even recent languages like Go or Rust. To achieve its properties, Lasp guides the programmer to use Conflict-free Replicated Data Types (CRDTs) and to program using operations with special mathematical properties (monotonic, order-preserving homomorphisms).

Special thanks for Frank McSherry for his valuable input on this article.

Updated less than a minute ago